Business Intelligence on the Cloud Data Platform: Approaches to Schemas

The cloud data platform combines data warehouse and data lake capabilities to support the exploding world of analytics. Like a data warehouse, the cloud data platform structures, transforms, and queries data. Like a data lake, it classifies multi-structured data objects in an elastic object store. The cloud data platform provides an ideal launchpad for modern business intelligence (BI) projects that need fast, flexible access to lots of varied data.

As you might expect, this is a tall order to fill. The cloud data platform must support rigorous requirements that include the following:

- Flexibility of data access: Data engineers and data analysts need to explore, search, discover, and query data from many angles.

- Performance: They need to access that data with low latency, high throughput, and high concurrency.

- Ease of transformation: They need to reduce complexity and processing overhead—and therefore cost—as they transform data for analytics.

To meet these requirements, data teams need a cloud data platform that structures data in multiple ways. It must create and apply schemas at different points in the data lifecycle, depending on the requirements and use case. This blog summarizes the pros and cons of two traditional approaches: schema on write and schema on read. Keep an eye out in the next couple of weeks for a companion blog in which Thomas Hazel, CTO and founder of ChaosSearch, will propose a new “third way” that leverages virtual schemas and compressed indexing.

Read: IT Professionals Reveal Cloud Data Platform Highs and Lows of 2021

What is a schema?

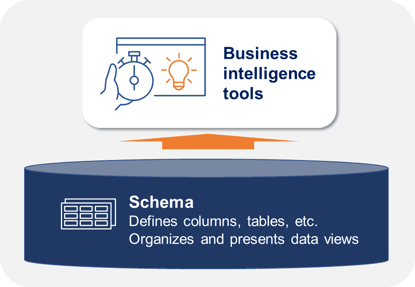

A schema is the structure definition that ties data together. It defines columns, tables, and views, as well as the ways in which these and other elements relate to one another. Schemas organize and present views of data to BI tools such as ThoughtSpot, Tableau, and Qlik for analytics. Without a schema, data remains unstructured, which makes it harder to query and analyze.

The Schema

Let’s explore how cloud data platforms apply the approaches of schema on write and schema on read.

Schema on write

Schema on write comes from the traditional data warehouse. With this approach, the data engineer creates a schema when they load or “write” data to a target such as a cloud data platform. When they create the schema, they also need to transform the data to align with it. The ETL (extract, transform, and load) and ELT (extract, load, then transform) methods of ingesting data can both support schema on write. Once transformed to fit the schema, the data then stands ready for the data analyst’s BI tool to read and query it whenever they like.

Schema on write works well for structured data that already has a schema from the source, and therefore just needs adjusting via filtering, merging, or reformatting. Schema on write makes it easier for data analysts to explore and discover data for traditional BI projects such as operational dashboards and reports. By transforming the data up front, this approach also can accelerate subsequent queries by BI tools. However, upfront transformation might reduce flexibility by limiting the types of BI queries that are possible. It also can require complex effort up front that increases cost. Cloud data platforms such as Amazon Redshift and Snowflake support schema on write.

Schema on write can improve BI query performance, but reduce flexibility.

It also requires effort and creates cost up front.

Schema on read

This approach, inherited from the data lake, does not apply the schema until the analyst needs to read the data. When that time comes, the data engineer with a data analyst creates codes for the schema. Then they transform the data—possibly creating a new copy—to fit the schema they require. Finally, the BI tool reads and queries the transformed data. ELT can support schema on read, with the transform (i.e., the T) happening only when the data analyst needs it.

The schema on read approach works well for diverse data from many sources, including semi-structured data that data analysts want to explore and discover in its native format. For example, this might include email messages that have descriptive tags rather than formal schema when they arrive in the cloud data platform. Schema on read also works well for multiple data analysts with evolving requirements, who might want to apply different schemas at different times. While it provides flexible support for evolving BI needs, schema on read can slow query performance. It also might require complex effort and therefore create cost when different use cases require different schemas and data copies. The query service Amazon Athena and (once again) Snowflake support schema on read.

Schema on read can provide flexibility, but reduce BI query performance.

It can require complex effort and create cost.

Tying it all together

So does modern BI require schema on write, schema on read, or something new? The short answer is “yes.” To support diverse BI use cases and requirements, the modern enterprise needs a cloud data platform that has both approaches. But there is no silver bullet. Schema on write can be fast but less flexible, while schema on read can be slower but more flexible. Both approaches can cost time, effort, and money.

As you might expect, smart innovators continue to devise new approaches to this problem. Enter Thomas Hazel, who proposes his new approach in a companion blog, coming soon.

Additional Resources

Read the Blog: 3 Use Cases for Relational Access to Log Data

Watch the Webinar: Choosing an Analytical Cloud Data Platform: Trends, Strategies & TechConsiderations

Listen to the Podcast: Solve a Problem, Change the World w/ Amr Awadallah

Check out the Whitepaper: Beyond Observability: The Hidden Value of Log Analytics