How Log Analytics Powers Cloud Operations: Three Best Practices for CloudOps Engineers

At the turn of the 20th century, enterprises shut down their clunky generators and started buying electricity from new utilities such as the Edison Illuminating Company.

In doing so, they cut costs, simplified operations, and made profound leaps in productivity.

The promise of modern cloud computing invites easy comparisons to those first electric utilities: outsource to them, save money and simplify.

Alas, Jeff Bezos is not Thomas Edison, and cloud computing delivers incremental benefits rather than profound leaps. For starters, you cannot outsource all your on-premises systems to a cloud provider, thanks to technical debt, data gravity and sovereignty laws. What you do outsource can generate surprisingly high cloud compute bills. In addition, you still need to carefully manage your application performance and govern your data on the cloud—and connect back to persistent on-premises infrastructure. The results of your transition to the cloud, while promising, depend on how well you manage these challenges.

The cross-functional discipline of cloud operations (CloudOps) helps enterprises manage these challenges and drive results.

CloudOps encapsulates the processes and tools you need to run applications, services, workloads, and infrastructure on public platforms offered by AWS, Microsoft Azure, and Google.

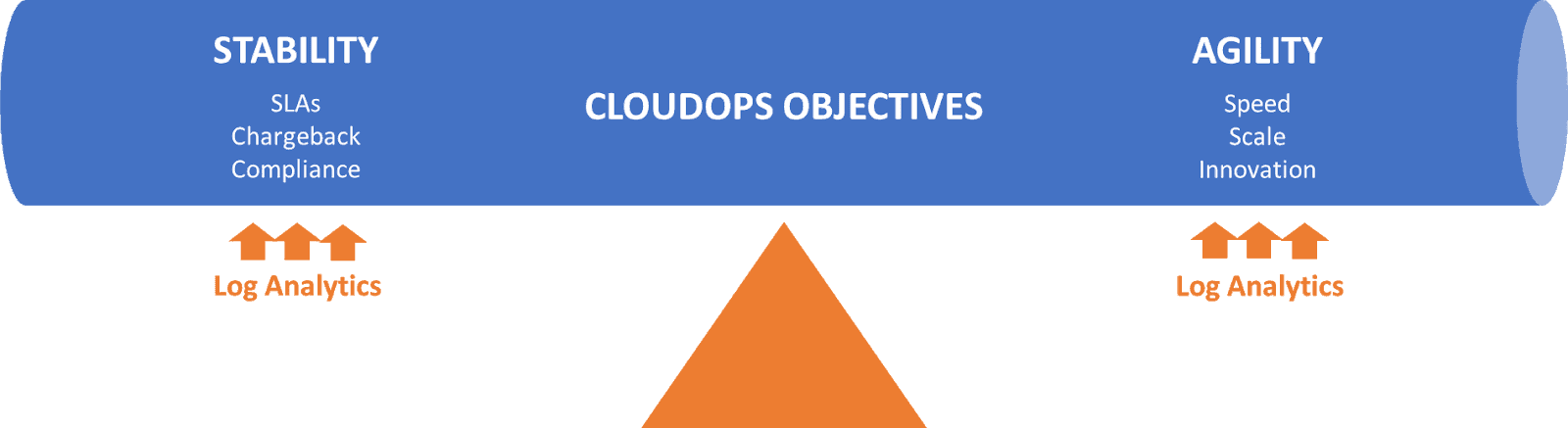

CloudOps seeks to achieve two classic, sometimes opposing, goals of IT: stability and agility.

- Stability. CloudOps engineers configure resources, utilize capacity, and monitor and respond to issues that arise. They apply consistent methods in order to meet service level agreements, support chargeback efforts, and assist compliance. These tasks and methods derive from the discipline of ITOps, which seeks to achieve the same goals of stability for on-premises environments.

- Agility. CloudOps helps enterprises continuously deliver cloud applications and updates to users, so that they can address urgent market demands, innovate, and rapidly release competitive enhancements. These tasks and methods derive from the discipline of DevOps, which streamlines software development and operations processes as enterprises scale.

To strike this balance between stability and agility, you must understand the intricate workings of your IT components on the cloud. Enter log analytics.

How Log Analytics Is the Key to Cloud Operations

Log Analytics Defined

Each day, the average enterprise’s cloud applications, containers, compute nodes, and other components throw off millions of tiny logs.

Each log is a file whose data describes an event such as a user action, service request, application task, or compute error. Logs also capture messages that applications and other components send to one another.

You perform log analytics when you ingest, transform, search, and analyze all those files of events and messages. Enterprises use log analytics to address a wide range of use cases, including customer engagement, IT security, and cloud operations.
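The four steps named above (ingest, transform, search, analyze) can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the JSON log format, the field names (`ts`, `service`, `level`, `msg`), and the sample lines are all assumptions made for the example, and real platforms handle far larger volumes and messier formats.

```python
import json
from collections import Counter

# Hypothetical raw log lines; real logs vary widely in format.
RAW_LOGS = [
    '{"ts": "2021-07-01T10:00:00Z", "service": "checkout", "level": "info", "msg": "order placed"}',
    '{"ts": "2021-07-01T10:00:02Z", "service": "checkout", "level": "ERROR", "msg": "payment timeout"}',
    '{"ts": "2021-07-01T10:00:05Z", "service": "search", "level": "error", "msg": "index unavailable"}',
    'not a json line',
]


def ingest(lines):
    """Parse each line as a JSON object, skipping lines that do not parse."""
    events = []
    for line in lines:
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue
        if isinstance(event, dict):
            events.append(event)
    return events


def transform(events):
    """Normalize fields so later steps can rely on a consistent shape."""
    return [
        {
            "ts": e.get("ts", ""),
            "service": e.get("service", "unknown"),
            "level": str(e.get("level", "INFO")).upper(),
            "msg": e.get("msg", ""),
        }
        for e in events
    ]


def search(events, level):
    """Return only the events at the requested severity level."""
    return [e for e in events if e["level"] == level]


def analyze(events):
    """Count events per service."""
    return Counter(e["service"] for e in events)


events = transform(ingest(RAW_LOGS))
errors = search(events, "ERROR")
errors_by_service = analyze(errors)
print(dict(errors_by_service))
```

The same shape applies whether the "analyze" step is a simple count, as here, or a dashboard query: the earlier steps exist to make the later ones cheap and reliable.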


Using Log Analytics in Cloud Operations

CloudOps engineers who analyze their logs effectively can achieve both stability and agility with cloud operations.

You can maintain stability by optimizing performance, controlling costs, and governing data usage.

You can stay agile by responding to events that require speed, scale, or innovation.

Log analytics provides the key to unlocking the promise of cloud computing.

Effective Log Analytics Provides Enterprises Both Stability and Agility with CloudOps

Enterprises that achieve these twin objectives with CloudOps adopt consistent best practices. Let’s explore three common best practices.

1. Automate

Kibana, Grafana, and numerous other visualization tools streamline the way you monitor, explore, search, query, and analyze logs across your cloud environment. In addition, various open-source and commercial tools can help you automate the ingestion, indexing, and transformation of all those logs. CloudOps teams that replace manual scripting with a GUI can speed up their log analytics projects and empower more people to handle them. They can further improve productivity by configuring automated alerts, and by automatically filtering those alerts over time, to reduce the time spent overseeing operations on the cloud.
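To make the idea of automated alerts plus automated alert filtering concrete, here is a small sketch in Python. It is not how Kibana, Grafana, or any specific tool implements alerting; the `AlertRule` class, its threshold, and its cooldown mechanism are hypothetical names chosen for illustration. The rule fires when the error count in a time window reaches a threshold, then stays quiet for a set number of windows so that one incident does not produce a flood of duplicate alerts.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AlertRule:
    """Hypothetical threshold alert with a simple cooldown filter."""
    name: str
    threshold: int          # error count per window that triggers an alert
    cooldown_windows: int   # windows to stay quiet after an alert fires
    _quiet_left: int = field(default=0, init=False)

    def evaluate(self, error_count: int) -> Optional[str]:
        """Return an alert message, or None if nothing should be sent."""
        if self._quiet_left > 0:
            # Still in cooldown: suppress the alert and count down.
            self._quiet_left -= 1
            return None
        if error_count >= self.threshold:
            self._quiet_left = self.cooldown_windows
            return f"ALERT {self.name}: {error_count} errors in window"
        return None


rule = AlertRule(name="checkout-errors", threshold=5, cooldown_windows=2)

# Hypothetical error counts for six consecutive time windows.
window_counts = [1, 7, 9, 8, 6, 2]
alerts = [rule.evaluate(count) for count in window_counts]
fired = [a for a in alerts if a is not None]

for message in fired:
    print(message)
```

With these sample counts, four windows exceed the threshold but only two alerts are sent, which is the kind of reduction in oversight time the practice aims for.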

2. Collaborate

CloudOps engineers should collaborate with peers such as ITOps and DevOps engineers given their common goals and heritage. These groups can teach one another about their pitfalls and lessons learned. Consider establishing a center of excellence that fosters a common methodology among these groups—and educates other users throughout the enterprise about the most efficient, effective ways to perform log analytics.

3. Architect for Scale

The traditional “ELK Stack” (referring to the open-source tools Elasticsearch, Logstash, and Kibana) depends on Apache Lucene, a search library whose bulky indexing methods can create bottlenecks in log processing. ELK Stack users try all kinds of workarounds to ease that pain. They reduce the supply of logs by restricting their ingestion or slashing retention periods. They reduce the consumption of logs by giving users quotas, throttling complex queries, or killing queries that take more than a few minutes. Things should not be that hard. If you use a log-management platform that indexes logs more efficiently, you can reduce or eliminate these processing bottlenecks, along with the need for workarounds.
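The cost of two of these workarounds, shorter retention and per-user quotas, is easy to see in a toy model. The Python sketch below is purely illustrative: the event ages, the `apply_retention` helper, and the `QueryQuota` class are assumptions for the example and do not represent Elasticsearch settings or any vendor's API.

```python
from datetime import datetime, timedelta, timezone

# Fixed "now" so the example gives repeatable results.
NOW = datetime(2021, 7, 1, tzinfo=timezone.utc)

# Hypothetical events, distinguished only by their age in days.
EVENT_AGES_DAYS = [1, 5, 20, 45, 90]
events = [
    {"id": i, "ts": NOW - timedelta(days=age)}
    for i, age in enumerate(EVENT_AGES_DAYS)
]


def apply_retention(events, now, retention_days):
    """Keep only events newer than the retention cutoff."""
    cutoff = now - timedelta(days=retention_days)
    return [e for e in events if e["ts"] >= cutoff]


class QueryQuota:
    """Hypothetical per-user cap on the number of queries allowed."""

    def __init__(self, max_queries):
        self.max_queries = max_queries
        self.used = {}

    def allow(self, user):
        count = self.used.get(user, 0)
        if count >= self.max_queries:
            return False  # quota exhausted: the query is rejected
        self.used[user] = count + 1
        return True


kept_30_days = apply_retention(events, NOW, retention_days=30)
kept_7_days = apply_retention(events, NOW, retention_days=7)

quota = QueryQuota(max_queries=2)
decisions = [quota.allow("analyst") for _ in range(3)]

print(len(kept_30_days), len(kept_7_days), decisions)
```

Cutting retention from 30 days to 7 drops an event that an investigation might still need, and the quota rejects the analyst's third query regardless of how important it is. Both workarounds trade away visibility to protect the indexing layer.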

Neither cloud computing nor CloudOps is a panacea.

But as with all aspects of technology, they depend on vigilance and smart action.

Enterprises that take automated, collaborative, and scalable approaches to log analytics can power successful CloudOps initiatives.

To learn more, join the webinar “Why and How Log Analytics Makes Cloud Operations Smarter” with Eckerson Group and ChaosSearch on Wednesday, July 14th.